AI content tools move fast, but ethical mistakes can do lasting damage to your brand. Copyright disputes, biased imagery, and deceptive practices have already hurt companies that moved too quickly without guardrails. Smart brands don't avoid AI; they use it responsibly, with clear guidelines that protect their reputation and build audience trust. This guide offers practical, actionable steps for creating ethical AI content without slowing down your workflow.

Understanding the Real Risks

AI ethics isn't abstract philosophy—it's business risk management. Three concrete dangers threaten brands using AI without safeguards:

- Copyright exposure: Generating content that closely imitates a specific artist's work or reproduces copyrighted or trademarked characters can trigger legal action

- Reputational damage: Publishing biased or stereotypical imagery alienates customers and invites public backlash

- Trust erosion: Passing off AI content as human-created work, or as a record of real events, misleads audiences and damages credibility

These risks are manageable with deliberate practices—not fear. The goal isn't perfection; it's thoughtful implementation that aligns AI use with your brand values.

1. Copyright: Creating Original Work That Respects Creators

Copyright law around AI is evolving, but core principles remain clear: don't reproduce protected works, and don't impersonate living artists. Your safest path is creating genuinely original content inspired by broad styles rather than specific creators.

What to avoid:

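- Artist name-dropping: Prompts like "in the style of [Living Artist]" invite infringement claims and public backlash from artists, even though an artistic style on its own is generally not protected by copyright. Some platforms now refuse these prompts outright.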

- Trademarked characters: Generating Mickey Mouse, Pikachu, or other protected characters for commercial use is likely to infringe copyright and trademark rights; labeling the output "transformative" rarely changes that.

- Direct reproduction: Uploading copyrighted images to "remix" or "restyle" them can violate both platform terms of service and copyright law.

Safe alternatives that work:

- Use movement-based descriptors: Instead of naming an individual artist ("Van Gogh style"), describe the technique: "post-impressionist brushwork with visible texture and swirling patterns"

- Reference historical periods: "Art Deco geometry," "Bauhaus minimalism," or "1980s Memphis Group patterns" are safer style anchors

- Create original characters: Build distinctive brand mascots rather than adapting existing IP

- Verify commercial rights: Before commercial use, check what each AI platform discloses about its training data and confirm its output licensing terms

Practical workflow: Before publishing any AI-generated image, ask: "Could someone reasonably mistake this for a specific artist's work or trademarked character?" If yes, regenerate with more generic style terms.
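Part of the prompt-side check can be automated before an image is even generated. The sketch below is a minimal Python example, assuming your team maintains its own lists of living artists and protected characters to avoid; `BLOCKED_ARTISTS`, `PROTECTED_CHARACTERS`, and `flag_prompt` are hypothetical names, and the entries shown are placeholders ("Jane Example" is fictional). It only flags prompts for a person to look at; it cannot tell whether a finished image resembles someone's work.

```python
import re

# Hypothetical, team-maintained lists. Entries here are placeholders only.
BLOCKED_ARTISTS = {"jane example"}  # fictional stand-in for a living artist
PROTECTED_CHARACTERS = {"mickey mouse", "pikachu"}

# Flags "style of" phrasing, as in "in the style of X".
STYLE_OF = re.compile(r"\bstyle of\b", re.IGNORECASE)


def flag_prompt(prompt: str) -> list[str]:
    """Return human-readable warnings for a prompt; an empty list means no flags."""
    text = prompt.lower()
    warnings = []
    if STYLE_OF.search(prompt):
        warnings.append("names a style source; describe the technique instead")
    # Plain substring checks: crude, but enough to trigger a second look.
    for name in sorted(BLOCKED_ARTISTS):
        if name in text:
            warnings.append(f"mentions blocked artist: {name}")
    for name in sorted(PROTECTED_CHARACTERS):
        if name in text:
            warnings.append(f"mentions protected character: {name}")
    return warnings
```

Called on `"Pikachu in the style of Jane Example"`, it returns three warnings (the "style of" phrasing, the placeholder artist, and the character), while a prompt like `"Art Deco geometry, gold and black palette"` returns an empty list. Substring matching will miss misspellings and can over-flag, which is acceptable for a tool whose only job is to prompt human review.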

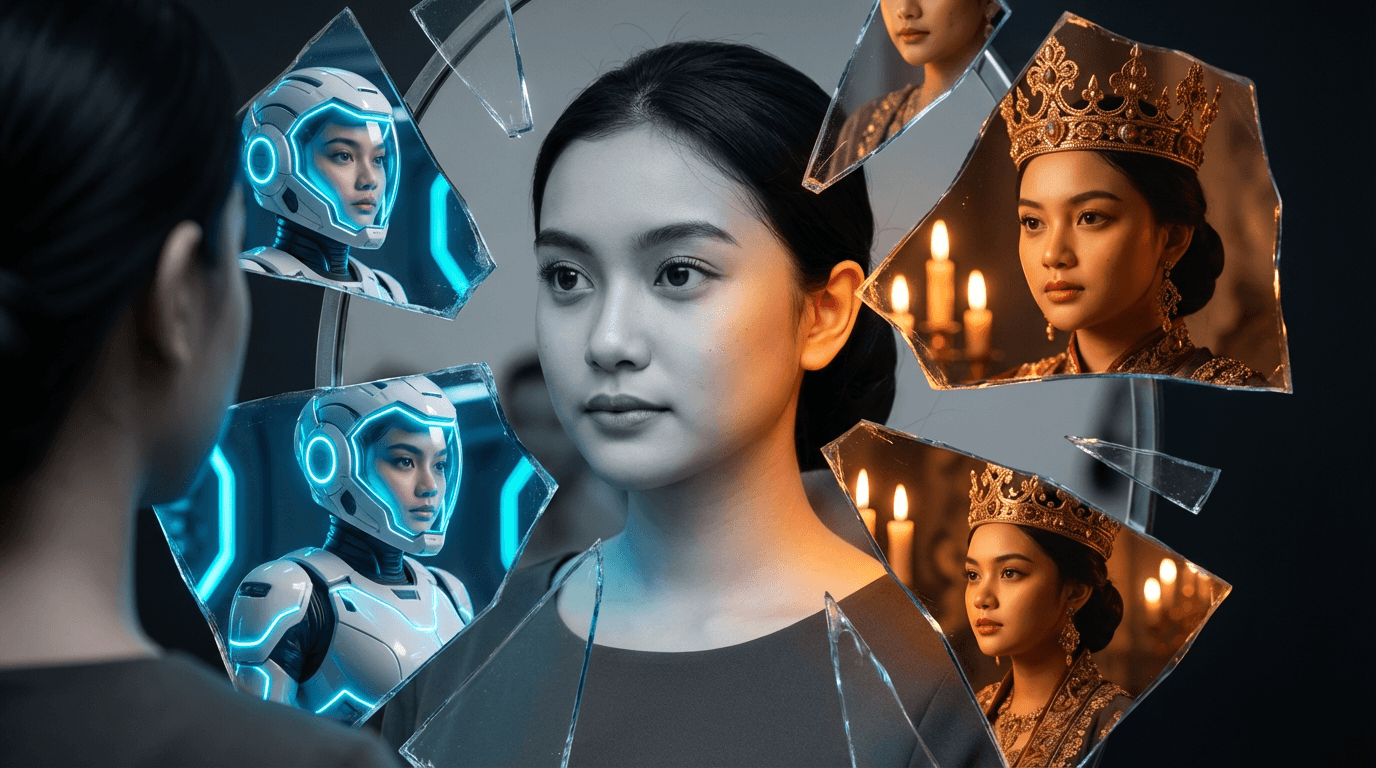

2. Bias Mitigation: Creating Inclusive Content That Represents Reality

AI models learn from internet data—which contains society's biases. Without intervention, they'll overrepresent certain demographics, reinforce stereotypes, and create homogeneous imagery. This isn't just ethically problematic; it limits your brand's connection with diverse audiences.

Proactive prompting techniques:

- Be explicitly inclusive: Instead of "business meeting," prompt "diverse business team including women, people of color, and different ages collaborating"

- Avoid stereotypical pairings: Don't default to "male engineer" or "female nurse"—specify "female mechanical engineer" or "male pediatric nurse" when relevant

- Specify diversity dimensions: Include age range, body types, abilities, and cultural markers when appropriate to your audience

- Context matters: For global brands, make sure imagery reflects the demographics of each target market

Human review checklist: Before publishing, verify that AI outputs:

- Reflect your actual customer base demographics

- Avoid reinforcing harmful stereotypes (e.g., only showing women in domestic settings)

- Include people with disabilities when relevant to context

- Depict cultural elements respectfully and accurately

"AI won't fix society's biases—it amplifies them. Your responsibility isn't to make AI unbiased (impossible with current technology) but to actively correct its outputs through intentional prompting and human review."

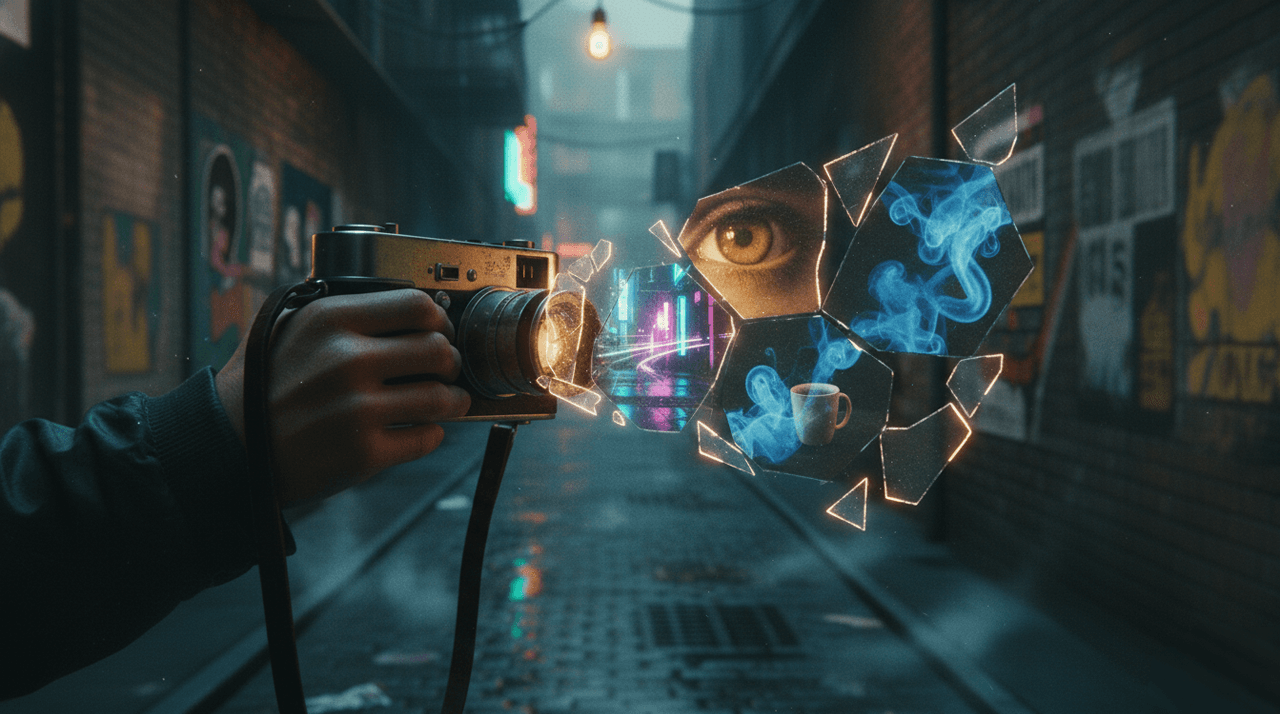

3. Transparency: Building Trust Through Honest Communication

Few brand mistakes erode trust as quickly as deception. When AI content misleads audiences about what is real or where it came from, the backlash can be swift and severe. Transparency isn't optional; it's brand insurance.

When disclosure is essential:

- Simulated events: If showing AI-generated images of disasters, protests, or news events, clearly label as "simulation" or "illustration"

- Product representation: Disclose when AI-generated "photos" show products that don't exist yet

- Human representation: If using AI avatars as "spokespeople," disclose they are digital creations

- Platform requirements: Follow platform-specific rules (Instagram's AI label, TikTok's synthetic media policies)

Practical disclosure approaches:

- Subtle labeling: A small "AI-generated" note in a corner of artistic content

- Contextual disclosure: A caption note such as "Visualizing this concept with AI" for product development content

- Platform tools: Use built-in AI labels on Instagram, Facebook, and TikTok

- Website footers: "Some visuals created with AI assistance" on relevant pages

Never automate publishing: Implement a mandatory human review step before any AI content goes live. This catches errors, bias issues, and inappropriate outputs that automated systems miss.

Building Your Brand's AI Ethics Checklist

Turn principles into practice with a simple pre-publishing checklist:

AI Content Safety Checklist

- ☐ No living artists' names or recognizable signature styles in the prompt

- ☐ No trademarked characters or logos generated

- ☐ Demographics reflect target audience diversity

- ☐ No harmful stereotypes reinforced in imagery

- ☐ Disclosure added where required by context/platform

- ☐ Human reviewer approved final output

- ☐ Content aligns with brand values and voice

Make this checklist part of your content workflow. For teams, require checklist completion before publishing AI-generated assets.
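Teams that push assets through a script or CMS hook can also encode the checklist as a hard publishing gate. Below is a minimal Python sketch, assuming each asset carries a record of which items a named reviewer has confirmed; `ChecklistItem`, `ReviewRecord`, `missing_items`, and `can_publish` are hypothetical names, not part of any particular CMS. The code only enforces that every box is ticked; the judgment behind each box still belongs to a person.

```python
from dataclasses import dataclass, field
from enum import Enum


class ChecklistItem(Enum):
    """One member per line of the AI Content Safety Checklist."""
    NO_ARTIST_REFERENCES = "No living artists' names or signature styles in the prompt"
    NO_TRADEMARKED_CHARACTERS = "No trademarked characters or logos generated"
    DEMOGRAPHICS_REFLECT_AUDIENCE = "Demographics reflect target audience diversity"
    NO_HARMFUL_STEREOTYPES = "No harmful stereotypes reinforced in imagery"
    DISCLOSURE_ADDED = "Disclosure added where required by context/platform"
    HUMAN_APPROVED = "Human reviewer approved final output"
    ON_BRAND = "Content aligns with brand values and voice"


@dataclass
class ReviewRecord:
    asset_id: str
    reviewer: str = ""  # empty string means no named reviewer yet
    confirmed: set[ChecklistItem] = field(default_factory=set)


def missing_items(record: ReviewRecord) -> list[ChecklistItem]:
    """Checklist items not yet confirmed, in checklist order."""
    return [item for item in ChecklistItem if item not in record.confirmed]


def can_publish(record: ReviewRecord) -> bool:
    """Allow publishing only when a named reviewer has confirmed every item."""
    return bool(record.reviewer) and not missing_items(record)
```

For example, `ReviewRecord("hero-banner-01", reviewer="A. Editor", confirmed={ChecklistItem.HUMAN_APPROVED})` fails `can_publish`, and `missing_items` returns the six unconfirmed items. Requiring a non-empty `reviewer` ties every published asset to an accountable person, mirroring the "Human reviewer approved final output" item.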

When Things Go Wrong: Damage Control Protocol

Mistakes will happen. How you respond often matters more than the error itself:

- Remove immediately: Take down problematic content without defensiveness

- Acknowledge publicly: "We published AI-generated imagery that misrepresented [issue]. We've removed it and are updating our review process."

- Explain correction: Briefly state how you'll prevent recurrence

- Don't over-apologize: Sincere acknowledgment beats performative groveling

Brands that handle mistakes transparently can end up more trusted than they were before the error.

The Business Case for Ethical AI

Ethics isn't just "the right thing to do"—it's smart business:

- Risk reduction: Avoid lawsuits, platform bans, and PR crises

- Audience trust: 85% of consumers say transparency about AI use increases trust (2025 Edelman data)

- Talent attraction: Purpose-driven teams prefer ethically conscious employers

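- Long-term viability: Regulation is already arriving (the EU AI Act, for example, includes transparency obligations for AI-generated content), and brands with established ethical practices will be best positioned to lead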

Responsible AI use isn't a constraint on creativity. It's the foundation for sustainable innovation that your audience will support long-term.

Ready to create AI content that builds trust instead of risking it? Implement ethical AI practices in your workflow today.